Hopper visualizes 4.4 billion flight prices with pure Python orchestration

Challenge

Visualizing billions of flight itineraries without painful debugging

Hopper is a global travel platform that uses data to help millions of users find the best flight and hotel deals, address pricing volatility, and handle trip disruptions. Hopper collects approximately 10 billion flight itineraries every day to power price predictions and recommendations in their travel app. The team wanted to create an interactive map where each pixel represents a flight itinerary's price, organized using a sophisticated nested spiral layout based on phyllotaxis patterns found in nature. They call this a Deep Zoom visualization.

The technical challenge was substantial:

- Process 4.4 billion trip records (representing just 1% of one week's data)

- Reorganize randomly distributed pixels into spatial tiles

- Build a resolution pyramid with multiple zoom levels

The workflow required pulling data from BigQuery, enriching it with lookup tables, sorting billions of pixels by tile location, aggregating them into images, and generating progressively downsampled versions.
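The "sort by tile location" step comes down to computing, for each pixel, which fixed-size tile it falls in and grouping on that key. Below is a minimal in-memory sketch of that idea. It assumes pixels arrive as hypothetical `(x, y, price)` tuples and uses the 256×256 tile size mentioned later for the Deep Zoom viewer. At Hopper's scale this grouping is a distributed shuffle, not a Python dict.

```python
from collections import defaultdict

TILE_SIZE = 256  # tile edge length in pixels (the DZI tile size used by the viewer)

def tile_key(x: int, y: int) -> tuple[int, int]:
    """Column and row of the tile that contains pixel (x, y)."""
    return x // TILE_SIZE, y // TILE_SIZE

def group_by_tile(pixels):
    """Bucket hypothetical (x, y, price) records by tile.

    Coordinates are stored relative to the tile's top-left corner, so each
    tile's bucket is enough to render that tile on its own.
    """
    tiles = defaultdict(list)
    for x, y, price in pixels:
        tiles[tile_key(x, y)].append((x % TILE_SIZE, y % TILE_SIZE, price))
    return dict(tiles)
```

Once pixels are grouped this way, each tile can be rendered independently, which is what makes the later image-building step parallel.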

Patrick Surry, Hopper's Chief Data Scientist, has experience with both Spark and Ray, and he knew this kind of distributed processing could be tricky. He first attempted the implementation with Ray but ran into deadlocks. As he described it:

"Ray was opaque and stuff would break. It was hard to understand why it was breaking and hard to know what to do to fix it."

From his earlier work with Spark, Patrick knew that getting distributed jobs right "took days to actually get things to run, let alone without failing." The team needed orchestration that gave them algorithmic control without the infrastructure complexity.

“I can just write async Python, and it's going to run on multiple containers instead of threads on my one machine. I'm like, wow, that's kind of amazing.”

Patrick Surry

Chief Data Scientist, Hopper

Solution

Union.ai delivered Python-native orchestration with container reuse.

With Union.ai, Hopper implemented their MapReduce-style shuffle algorithm in pure async Python. The breakthrough came from Union's async-native design, which enabled the team to build sophisticated distributed algorithms without the operational overhead.

Several technical capabilities stood out:

Pure Python Async Patterns

Unlike traditional frameworks that require thinking in "parallel joins and maps and reduces," Union.ai let the team implement the exact algorithm they wanted using familiar async/await syntax.

Patrick noted: "If you understand the async programming model in Python, then it just lets you run stuff faster without having to think about where it will run."
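Here is a minimal sketch of that programming model in plain `asyncio`. It makes no assumptions about Union's API: `enrich_partition` is a hypothetical coroutine standing in for a task that, on Union, would run in its own container. What carries over is the control flow: launch many awaitables and gather their results.

```python
import asyncio

async def enrich_partition(partition_id: int) -> int:
    """Hypothetical stand-in for a remote task; here it only simulates I/O."""
    await asyncio.sleep(0.01)  # pretend to wait on storage or a database
    return partition_id * 10   # placeholder result

async def run_all(n_partitions: int) -> list[int]:
    # Start every partition concurrently and collect results in input order.
    return list(await asyncio.gather(*(enrich_partition(i) for i in range(n_partitions))))
```

For example, `asyncio.run(run_all(8))` returns the eight results in partition order, even though the partitions ran concurrently.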

Algorithmic Control with Composability

The team used async composability to optimize their data pipeline. Rather than relying on framework primitives that require pulling all data before enrichment can start, they wrote a task that pulls BigQuery read streams and performs the joins in parallel, which largely avoids materializing the full dataset.

This pattern eliminated the need to wait for all data pulls to complete before starting enrichment, improving overall pipeline efficiency.
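The pull-and-enrich pattern described above can be sketched with async generators. This is a minimal local illustration, not Hopper's code. `read_stream` is a hypothetical stand-in for a BigQuery read stream, and `AIRPORT_REGION` is a made-up lookup table. Each stream joins its chunks as soon as they arrive, and all streams run concurrently.

```python
import asyncio
from collections.abc import AsyncIterator

# Hypothetical lookup table used for enrichment (airport code -> region label).
AIRPORT_REGION = {"BOS": "NE", "JFK": "NE", "SFO": "W", "MIA": "SE"}

async def read_stream(rows: list[dict], chunk_size: int = 2) -> AsyncIterator[list[dict]]:
    """Hypothetical stand-in for one BigQuery read stream that yields chunks."""
    for i in range(0, len(rows), chunk_size):
        await asyncio.sleep(0)  # yield to the event loop, as a network read would
        yield rows[i:i + chunk_size]

async def pull_and_enrich(rows: list[dict]) -> list[dict]:
    """Join each chunk against the lookup table as soon as it arrives."""
    enriched = []
    async for chunk in read_stream(rows):
        enriched.extend({**r, "region": AIRPORT_REGION.get(r["origin"], "?")} for r in chunk)
    return enriched

async def pull_all(streams: list[list[dict]]) -> list[list[dict]]:
    # Streams are pulled and enriched concurrently; no stream waits for the
    # others to finish downloading before its own join starts.
    return list(await asyncio.gather(*(pull_and_enrich(s) for s in streams)))
```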

Multi-Level Shuffle Algorithm

The workflow implements a hierarchical shuffle (8 → 64 → 512 partitions) to avoid the O(N²) file operations that would result from partitioning directly into thousands of tiles. Different phases require different worker counts, and Union's auto-scaling handled this transparently.
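The sketch below shows why a multi-level shuffle reduces file counts. It assumes each record already knows its final partition (0 to 511) and that every level uses the same number of writer tasks. Both assumptions are simplifications for illustration. Each level only refines the partition from the previous level, so a writer emits at most 8 files. In a direct shuffle, a writer may emit one file per final partition.

```python
FANOUT = 8
LEVELS = 3                    # 8 -> 64 -> 512 partitions
FINAL = FANOUT ** LEVELS      # 512 final partitions

def partition_at_level(final_bucket: int, level: int) -> int:
    """Partition index of a record at a given level (1..LEVELS).

    Level 1 uses final_bucket // 64, level 2 uses final_bucket // 8, and
    level 3 uses final_bucket itself, so each level subdivides the last.
    """
    if not 0 <= final_bucket < FINAL or not 1 <= level <= LEVELS:
        raise ValueError("bucket or level out of range")
    return final_bucket // FANOUT ** (LEVELS - level)

def files_direct(writers: int) -> int:
    # Every writer may write one file per final partition.
    return writers * FINAL

def files_hierarchical(writers: int) -> int:
    # Assumption: each level has the same number of writers, and each writer
    # writes at most FANOUT files (one per child of its current partition).
    return LEVELS * writers * FANOUT
```

With a hypothetical 1,000 writers per level, a direct shuffle could produce 512,000 files, while the three-level version produces at most 24,000.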

Reusable Containers for Efficiency

With thousands of short-lived tasks (often just a few seconds long), container startup overhead would have been devastating. Union's reusable containers eliminated this waste.
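A rough model makes the startup cost concrete. The figures in the example are hypothetical and do not come from Hopper's runs: a 30-second cold start, 2-second tasks, and 64 workers that each run one task at a time. Without reuse, every task pays the cold start. With reuse, each worker pays it once.

```python
def wall_time_cold(n_tasks: int, task_s: float, startup_s: float, workers: int) -> float:
    """Every task starts a fresh container, so each one pays the startup cost."""
    rounds = -(-n_tasks // workers)  # ceiling division: batches of parallel tasks
    return rounds * (startup_s + task_s)

def wall_time_reused(n_tasks: int, task_s: float, startup_s: float, workers: int) -> float:
    """Each worker starts once, then runs its tasks back to back."""
    rounds = -(-n_tasks // workers)
    return startup_s + rounds * task_s
```

For 6,400 such tasks, the cold-start model gives 3,200 seconds and the reuse model gives 230 seconds. Startup dominates whenever tasks are much shorter than container boot time.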

The auto-scaling range (2-64 replicas) adapted dynamically in two ways. Within a single workflow run, different shuffle phases required different parallelism. Across dataset sizes, the same configuration handled 1-million-trip test runs that needed only a few workers, then scaled automatically to 4.4 billion trips without any code changes.
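One simple way to think about a 2-64 replica range is as a clamp on demand. The rule below is hypothetical and is not Union's actual autoscaler. It requests enough replicas for the pending backlog but never fewer than 2 or more than 64, so a small test run stays small and a large shuffle phase saturates at the ceiling.

```python
import math

MIN_REPLICAS, MAX_REPLICAS = 2, 64

def desired_replicas(pending_tasks: int, tasks_per_replica: int = 1) -> int:
    """Hypothetical rule: replicas to cover the backlog, clamped to 2..64."""
    needed = math.ceil(pending_tasks / tasks_per_replica)
    return max(MIN_REPLICAS, min(MAX_REPLICAS, needed))
```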

As Patrick explained:

"It means I can run these much smaller grain tasks. So I can have tasks that only take a second or two and they actually run fast instead of having big startup time... I don't have to think so much about the granularity of tasks."

Optimized I/O with DataFrame References

Tasks pass flyte.io.DataFrame objects between stages, and these are automatically offloaded to GCS. The team worked with file references to avoid memory overload, materializing individual chunks only when needed. Union's optimized downloads from object storage are up to 4x faster than in v1 (e.g., 2GB in 5 seconds).
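The reference-passing idea can be shown without any Union API. In this sketch, a local temporary directory stands in for GCS, and `ChunkRef` and `write_chunk` are hypothetical helpers rather than `flyte.io.DataFrame`. Stages hand around small handles, and a stage loads rows only for the chunk it actually processes.

```python
import csv
import tempfile
from dataclasses import dataclass
from pathlib import Path

@dataclass(frozen=True)
class ChunkRef:
    """Hypothetical lightweight handle: stages pass this instead of the rows."""
    path: Path

    def load(self) -> list[dict]:
        # Materialize rows only when a stage actually needs them.
        with self.path.open(newline="") as f:
            return list(csv.DictReader(f))

def write_chunk(directory: Path, name: str, rows: list[dict]) -> ChunkRef:
    """Persist one chunk and return a reference to it."""
    path = directory / f"{name}.csv"
    with path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=list(rows[0]))
        writer.writeheader()
        writer.writerows(rows)
    return ChunkRef(path)

def demo() -> list[dict]:
    with tempfile.TemporaryDirectory() as d:
        refs = [write_chunk(Path(d), f"chunk_{i}", [{"tile": i, "price": 100 + i}]) for i in range(3)]
        # Only the second chunk is read back; the others stay on disk.
        return refs[1].load()
```

Because CSV stores text, the loaded values come back as strings. A real pipeline would use a typed format such as Parquet.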

Simplified Image Building

The team defined their container environment without writing a Dockerfile.

"It's kind of amazing to me that I got this to work, and I really don't understand anything about the image that it's running on."

The image builds happened seamlessly in the background, either locally or remotely on the Union cluster.

Integrated App Serving

Once tiles were generated and saved to GCS, the team deployed a FastAPI application to serve the visualization, with Union handling authentication, authorization, auto-scaling, scaling to zero, and monitoring—all without additional infrastructure setup.


Results

Hopper delivered a production-ready visualization in just one day

Patrick migrated from a Ray implementation that couldn't get past its deadlocking issues to a working, production-ready Union.ai implementation in approximately one day of focused effort. The workflow executed 22,300 total tasks, with concurrent bursts of 3,000 executions during data ingestion and enrichment and 7,000 executions during tile generation and upload to GCS.

Perhaps most impressively, the algorithm scaled seamlessly without code changes:

"I got to something that was a good scalable algorithm and then I just put more data into it and it kept working. I ran it with four billion examples and that worked on the first attempt."

The workflow-to-visualization pipeline was a perfect example of Union's end-to-end capabilities. Once tiles were generated and saved to GCS, the team deployed a FastAPI application on Union's serving platform to pull and render the data. Union.ai automatically managed authentication, authorization, and auto-scaling, absorbing high traffic and scaling to zero during idle periods.

"Having a controlled environment like Union.ai... provides a nice way to be able to share stuff that you're working on with other people."

Serving the Visualization: Making Billions Comprehensible

The visualization uses the Deep Zoom Image (DZI) format consisting of a tiled image pyramid where the massive image is split into thousands of 256×256 pixel tiles at multiple zoom levels. OpenSeadragon, a JavaScript viewer, loads only the visible tiles on demand as users pan and zoom, making it possible to navigate billions of pixels smoothly in a browser.
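The size of a Deep Zoom pyramid follows directly from the image dimensions. In the DZI convention, each level halves the one above it down to a 1×1 image, so the top level index is ceil(log2(max(width, height))). The sketch below lists each level's size and tile count for 256×256 tiles. It covers layout arithmetic only and does not render images.

```python
import math

def dzi_levels(width: int, height: int, tile_size: int = 256) -> list[tuple[int, int, int, int]]:
    """Return (level, level_width, level_height, tile_count) for each pyramid level."""
    max_level = math.ceil(math.log2(max(width, height)))
    levels = []
    for level in range(max_level + 1):
        scale = 2 ** (max_level - level)  # downsampling factor relative to full size
        w = math.ceil(width / scale)
        h = math.ceil(height / scale)
        tiles = math.ceil(w / tile_size) * math.ceil(h / tile_size)
        levels.append((level, w, h, tiles))
    return levels
```

For a 65,536×65,536 image, the top level (16) holds 65,536 tiles, and every level at or below 256×256 (level 8 downward) fits in a single tile. This is why the viewer only has to fetch the handful of tiles on screen.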

A FastAPI application serves three types of resources: the HTML viewer, CSV files containing airport and route metadata for interactive labels, and the image tiles themselves, pulled directly from GCS. Union's app serving platform deployed this with a simple declarative configuration.
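The core job of the tile route is translating a viewer request into a storage location. The framework-free sketch below uses the standard DZI tile naming (`<name>_files/<level>/<col>_<row>.<format>`). The bucket name and object layout are hypothetical, since the deployment's real layout isn't described here. Inside a FastAPI handler, the returned URI would be used to fetch the tile bytes from GCS.

```python
import re

# Hypothetical bucket; the real deployment's bucket and layout are not documented here.
BUCKET = "example-tiles-bucket"
TILE_PATH = re.compile(
    r"^(?P<name>[\w-]+)_files/(?P<level>\d+)/(?P<col>\d+)_(?P<row>\d+)\.(?P<fmt>png|jpe?g)$"
)

def tile_object_uri(request_path: str) -> str:
    """Map a Deep Zoom tile request path to the GCS object assumed to hold it."""
    m = TILE_PATH.match(request_path)
    if m is None:
        # Reject anything that isn't a well-formed tile path (e.g. "../" tricks).
        raise ValueError(f"not a DZI tile path: {request_path!r}")
    return (f"gs://{BUCKET}/{m['name']}/{int(m['level'])}/"
            f"{int(m['col'])}_{int(m['row'])}.{m['fmt']}")
```

Validating the path with a strict pattern before touching storage keeps the tile endpoint from being used to read arbitrary objects.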

Union.ai handled authentication, auto-scaling, and serving—transforming the FastAPI app into a production-ready endpoint with zero infrastructure configuration.

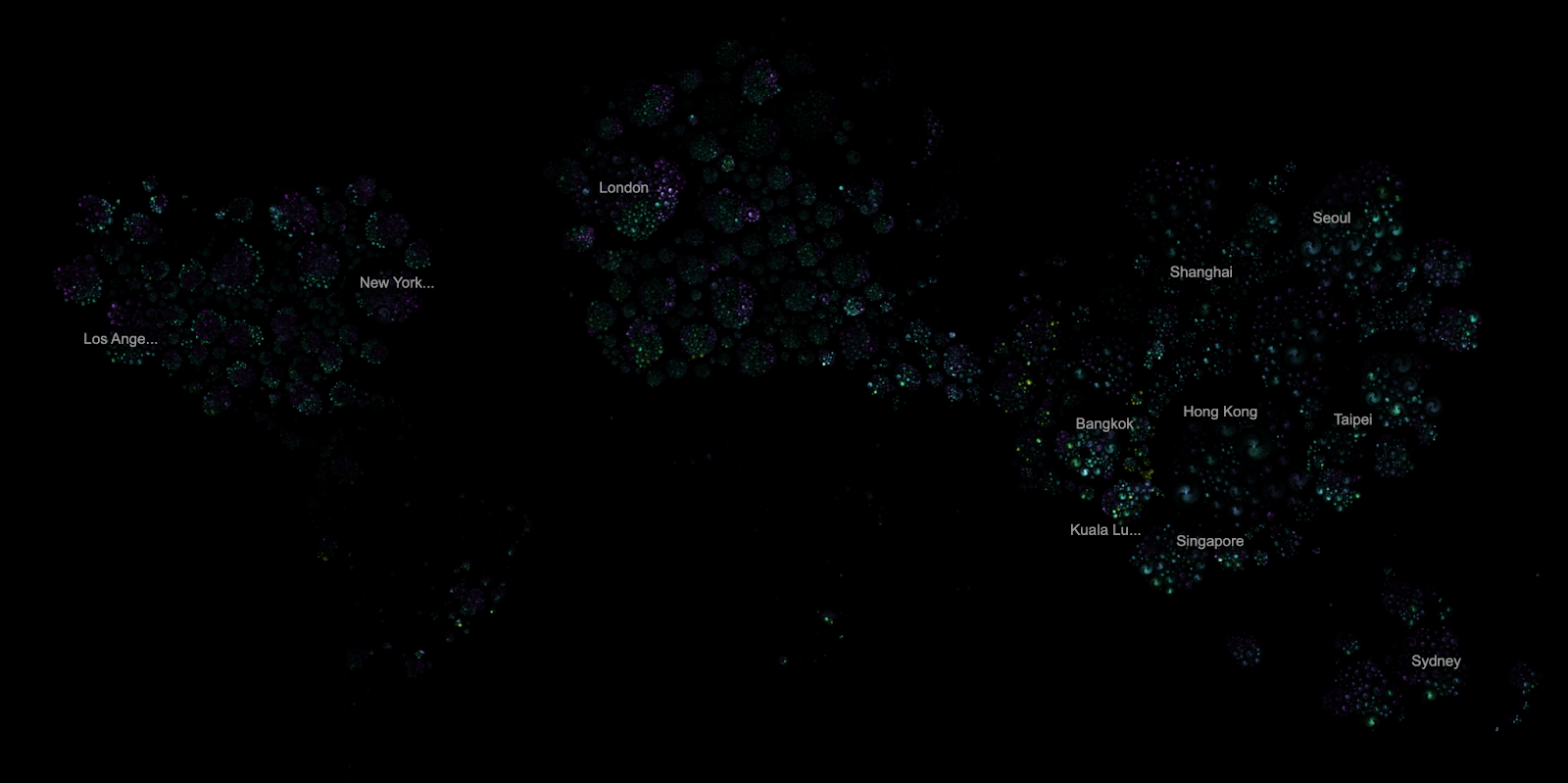

The resulting Deep Zoom visualization transforms abstract numbers into intuitive visual patterns. Using phyllotaxis-based nested spirals (the same mathematical patterns found in sunflower seeds and pine cones), the team encoded multiple dimensions into each pixel: advance booking time, day of week, flight type, trip duration, and departure times.
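The classic single-level phyllotaxis layout is Vogel's model. It places point k at angle k × the golden angle (about 137.5°) and at radius proportional to √k, which packs points evenly in a disc. The sketch below implements only that base pattern. Hopper's nested layout and its mapping of trip dimensions to points are not described in enough detail to reproduce. One natural way to nest the pattern would be to place each child spiral at a point of its parent spiral.

```python
import math

GOLDEN_ANGLE = math.pi * (3 - math.sqrt(5))  # about 137.508 degrees, in radians

def phyllotaxis(n_points: int, spacing: float = 1.0) -> list[tuple[float, float]]:
    """Vogel's model: point k at angle k * GOLDEN_ANGLE and radius spacing * sqrt(k)."""
    points = []
    for k in range(n_points):
        r = spacing * math.sqrt(k)
        theta = k * GOLDEN_ANGLE
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

Because the radius grows as √k, each new ring of points covers roughly the same area, so pixel density stays uniform from the center of a cluster to its edge.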

When zoomed out, the app displays all 4.4 billion flight prices as a "universe" of galactic clusters, with each origin city forming its own cluster, loosely organized geographically.

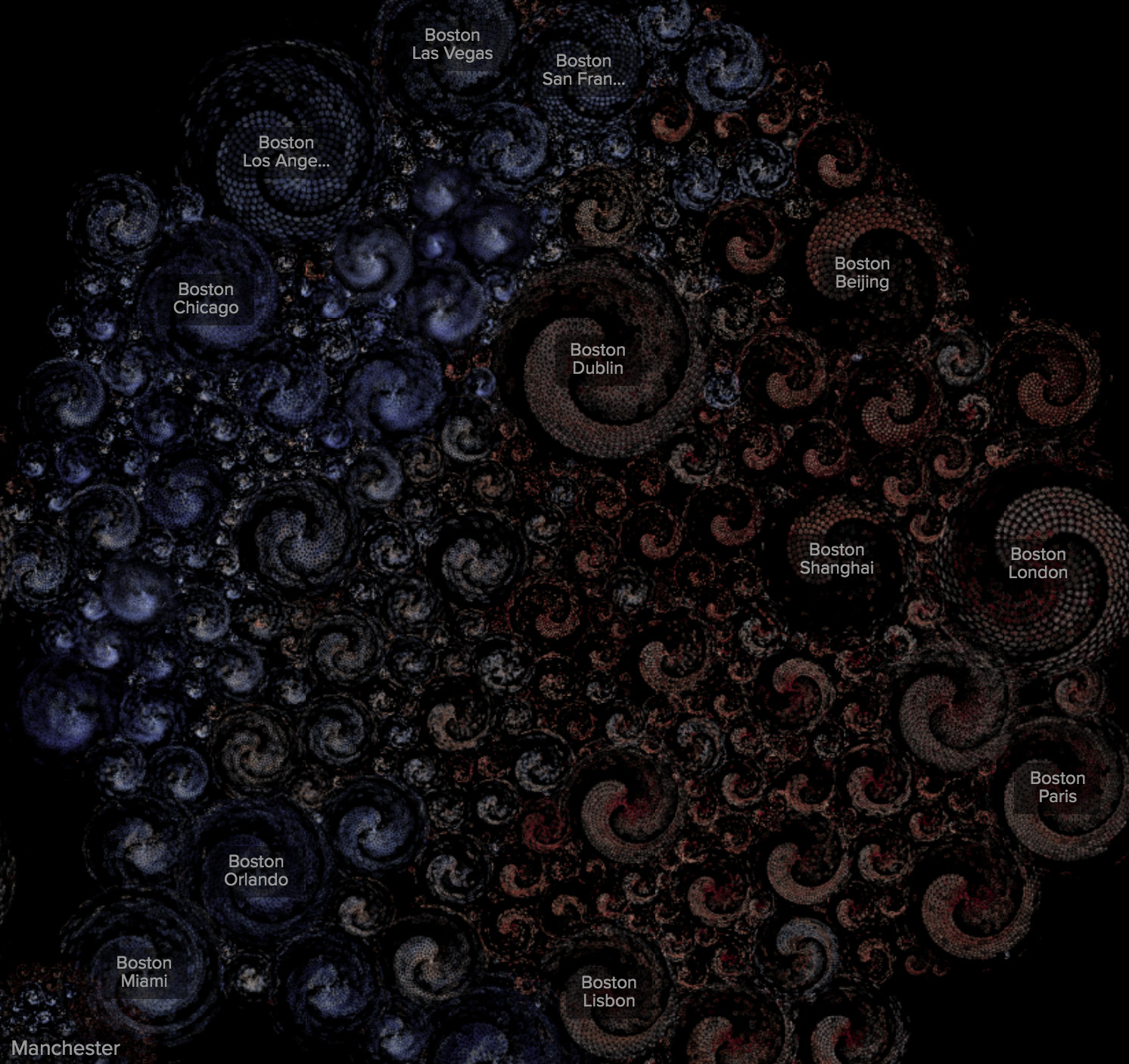

Zooming into a single origin like Boston reveals individual "galaxies," each representing a destination market, organized by geographic proximity and destination similarity (warm island destinations naturally cluster together).

Zooming further into a destination market reveals characteristic "flower" patterns for specific departure dates, with petals representing different combinations of departure and arrival times.

A live reproduction of this visualization is available on Union's infrastructure.

Looking Ahead

The 4.4 billion trips represent just 1% of one week's data from Hopper's archive. The team plans to scale to 100x this volume and to apply similar distributed processing patterns to its ML workflows, including deep learning models for flight price prediction. They are confident that Union.ai can be their partner at that scale.